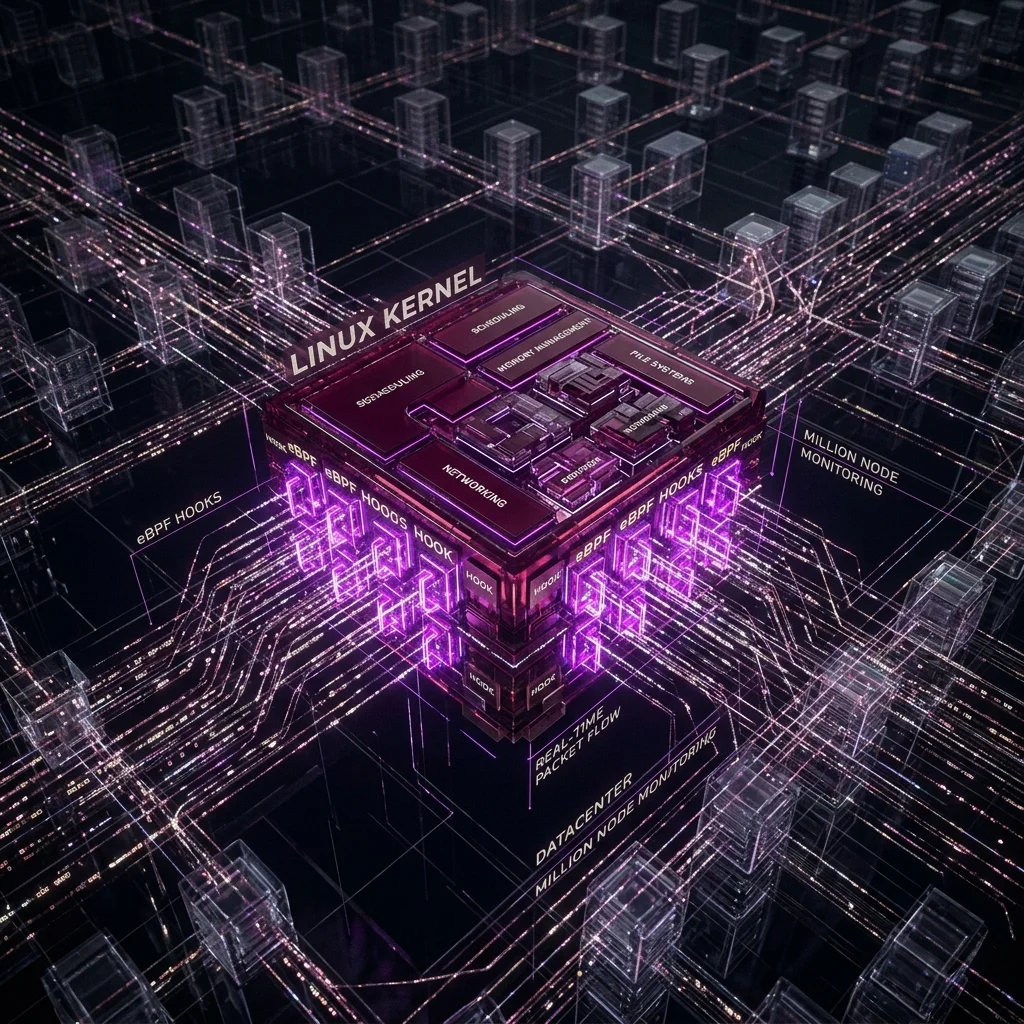

At a million nodes, traditional observability architectures collapse. A sidecar agent consuming 0.5% of one CPU on every node adds up to 5,000 full CPUs of overhead. A 10 KB/s metrics stream per node becomes 10 GB/s of internal traffic. The math does not work, so we needed a different approach.
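The figures above come from linear scaling: the per-node cost is multiplied by the node count. The sketch below reproduces that arithmetic using the paragraph's own numbers, 0.5% of one CPU and 10 KB/s per node. It assumes decimal units (1 KB = 1,000 bytes) and that no cost is shared between nodes.

```python
def fleet_total(per_node: float, nodes: int) -> float:
    """Aggregate a per-node cost across the fleet (linear scaling, nothing shared)."""
    return per_node * nodes


NODES = 1_000_000

# 0.5% of one CPU per node, expressed in CPUs.
agent_cpu_per_node = 0.005
# 10 KB/s per node in bytes per second (decimal units assumed: 1 KB = 1,000 bytes).
metrics_bytes_per_node = 10_000

total_cpus = fleet_total(agent_cpu_per_node, NODES)
total_gb_per_s = fleet_total(metrics_bytes_per_node, NODES) / 1e9

print(f"{total_cpus:,.0f} CPUs of agent overhead")    # 5,000 CPUs of agent overhead
print(f"{total_gb_per_s:.0f} GB/s of metrics traffic")  # 10 GB/s of metrics traffic
```

A per-node cost that looks negligible is never amortized away. It grows in lockstep with the fleet.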

Why does traditional agent-based observability fail at datacenter scale?

Every agent is a process, and every process has overhead: memory footprint, scheduler time slices, open file descriptors, and network connections to the collection backend. At 1,000 nodes this is negligible. At 1,000,000 nodes the aggregate overhead is a datacenter of its own.

Beyond compute overhead, agents have a deployment and version-management problem. Rolling out an agent update across a million nodes is a multi-week operation with a significant blast radius. A kernel-native approach addresses both problems at once.

How does eBPF enable near-zero-overhead observability?

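eBPF programs are bytecode that the kernel verifies and then JIT-compiles to native code. They run directly in the Linux kernel, attached to hooks in the same execution path as the system call, network packet, or scheduler event being observed. On that per-event path there is no context switch, no IPC, and no userspace agent. Userspace only loads the programs and reads their results from eBPF maps or ring buffers. The measurement overhead is a few dozen nanoseconds per event, comparable to a cache miss.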
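A per-event cost only becomes a CPU budget once it is multiplied by the event rate. The sketch below shows that arithmetic. Its inputs are hypothetical: 20,000 instrumented events per second per node and 30 ns of eBPF work per event, which sits within the "few dozen nanoseconds" stated above. They are illustrative values, not measurements.

```python
NS_PER_SECOND = 1_000_000_000


def cores_consumed(events_per_second: float, ns_per_event: float) -> float:
    """CPU cores spent on instrumentation: (events/s * ns/event) / ns per core-second."""
    return events_per_second * ns_per_event / NS_PER_SECOND


# Hypothetical inputs: 20,000 events/s per node, 30 ns of eBPF work per event.
per_node = cores_consumed(20_000, 30)
fleet = per_node * 1_000_000

print(f"per node: {per_node:.4f} cores ({per_node * 100:.2f}% of one core)")
print(f"fleet:    {fleet:,.0f} cores")
```

Cost grows linearly with the event rate, so the hooks that fire most often dominate the overhead budget.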

What events does the pipeline capture?

- Network: per-flow latency, retransmissions, ECN signals, congestion window evolution

- Storage: IOPS, per-request latency histograms, queue depth, device error rates

- Scheduler: CPU run-queue latency, context-switch rates, involuntary preemptions

- Security: execve auditing, privilege-escalation attempts, unusual syscall sequences
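The latency histograms in the storage item show why aggregating in the kernel keeps data volume small. The sketch below is a pure-Python simulation, not an eBPF program, and it assumes power-of-two buckets of the kind common in eBPF tooling. Recording an event costs one bucket increment, which in a real deployment would be an eBPF map update inside the kernel. Userspace then reads only the bucket counts, however many events occurred. The class and method names are hypothetical.

```python
from __future__ import annotations

from collections import Counter


class Log2Histogram:
    """Power-of-two histogram: bucket 0 holds 0; bucket i >= 1 holds [2**(i-1), 2**i)."""

    def __init__(self) -> None:
        self.buckets: Counter[int] = Counter()

    def record(self, latency_us: int) -> None:
        # O(1) per event: bit_length() yields the power-of-two bucket index
        # (0 -> 0, 1 -> 1, 2..3 -> 2, 4..7 -> 3, ...).
        self.buckets[latency_us.bit_length()] += 1

    def snapshot(self) -> dict[int, int]:
        """What userspace would read: one count per non-empty bucket."""
        return dict(sorted(self.buckets.items()))

    @staticmethod
    def bucket_range(index: int) -> tuple[int, int]:
        """Inclusive value range covered by a bucket."""
        if index == 0:
            return (0, 0)
        return (1 << (index - 1), (1 << index) - 1)


hist = Log2Histogram()
for latency in [0, 1, 3, 3, 5, 120, 130, 900]:
    hist.record(latency)
print(hist.snapshot())  # {0: 1, 1: 1, 2: 2, 3: 1, 7: 1, 8: 1, 10: 1}
```

For 64-bit values the snapshot never exceeds 65 entries. A node can therefore ship a compact summary instead of a raw per-request event stream.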