Running a 70B-parameter model in production is not a software problem; it is a systems problem. The model weights alone consume 140GB of VRAM at 16-bit precision (70 billion parameters at 2 bytes each). At our target throughput of 500 requests per second, naive single-GPU inference would require 480 A100s with zero coordination overhead. The actual challenge is the coordination overhead.
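The weight-memory arithmetic can be checked with a few lines of Python. This is only a sketch: the 80GB per-GPU capacity is an assumption (the text does not say which A100 variant is used), and it counts weights only, ignoring activations and KV-cache.

```python
import math

def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Memory for the weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9

def min_gpus_for_weights(weights_gb: float, gpu_memory_gb: float) -> int:
    """Lower bound on GPUs needed just to hold the weights."""
    return math.ceil(weights_gb / gpu_memory_gb)

weights_gb = weight_memory_gb(70e9)  # 70B parameters, 2 bytes each
# ASSUMPTION: 80 GB of memory per GPU; not stated in the text.
gpus = min_gpus_for_weights(weights_gb, gpu_memory_gb=80.0)
print(f"weights: {weights_gb:.0f} GB, at least {gpus} GPUs to hold them")
```

Even before throughput enters the picture, the weights do not fit on one device under this assumption, which is why partitioning is unavoidable.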

What is the core challenge of distributed inference?

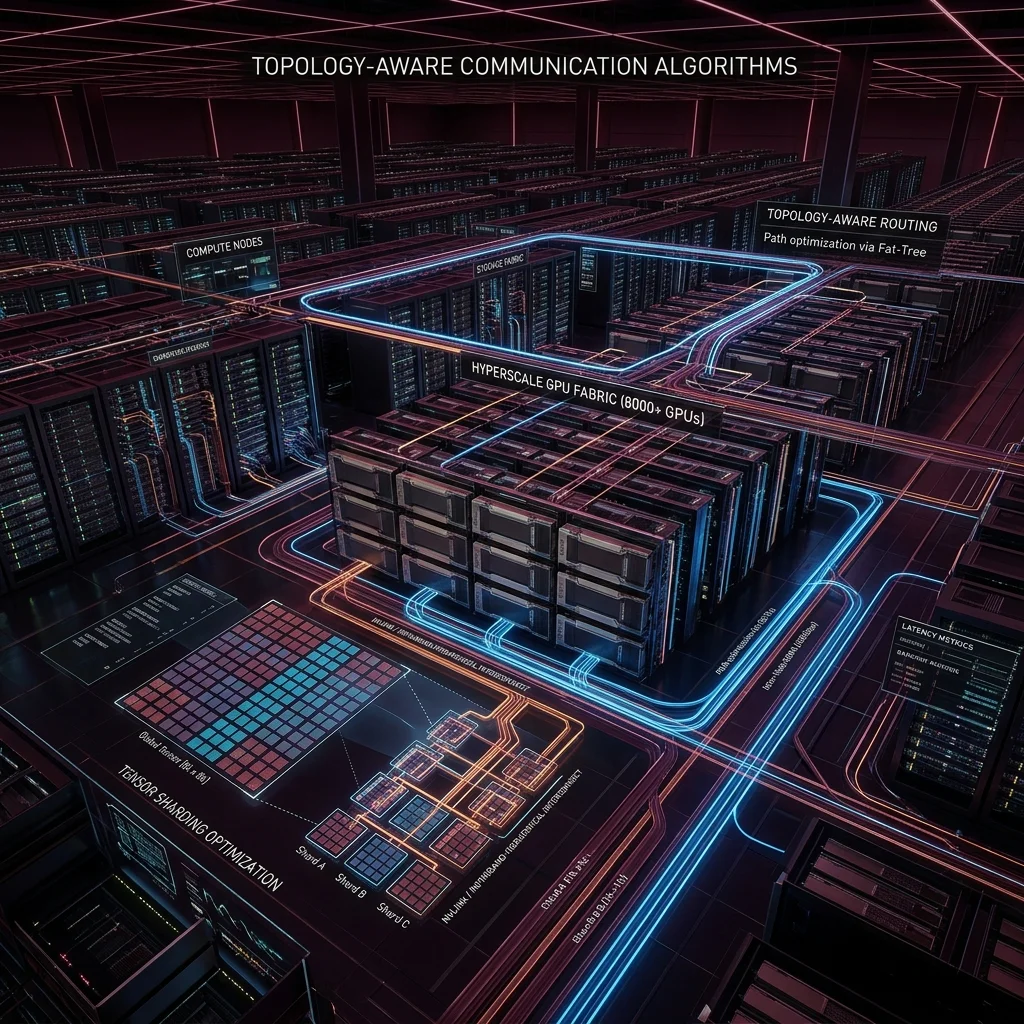

Distributed inference requires partitioning both the model and the computation across multiple accelerators while keeping inter-device communication costs below the arithmetic compute time. Get the balance wrong and your GPUs spend more time waiting for network transfers than doing matrix multiplication.
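A back-of-the-envelope model makes that balance concrete. The sketch below compares an estimated matmul time against the time of a ring all-reduce, using the standard result that each rank sends 2(N-1)/N times the buffer size. Every number in it (peak FLOPs, achieved efficiency, link bandwidth, per-step latency, tensor shapes) is a placeholder assumption, not a measurement from our cluster.

```python
def matmul_time_s(m, k, n, peak_flops, efficiency=0.5):
    """Estimated time for an (m x k) @ (k x n) matmul, which costs 2*m*k*n FLOPs."""
    return (2 * m * k * n) / (peak_flops * efficiency)

def ring_all_reduce_time_s(num_bytes, world_size, bandwidth_bytes_per_s,
                           step_latency_s=0.0):
    """Estimated ring all-reduce time: 2*(N-1) steps, each moving num_bytes/N."""
    if world_size <= 1:
        return 0.0
    steps = 2 * (world_size - 1)
    bytes_sent_per_rank = steps / world_size * num_bytes
    return bytes_sent_per_rank / bandwidth_bytes_per_s + steps * step_latency_s

# ASSUMPTIONS: all values below are illustrative placeholders.
tokens, hidden, tp = 4096, 8192, 8
compute_s = matmul_time_s(tokens, hidden, hidden // tp, peak_flops=312e12)
comm_s = ring_all_reduce_time_s(
    num_bytes=tokens * hidden * 2,   # fp16 activations
    world_size=tp,
    bandwidth_bytes_per_s=300e9,
    step_latency_s=5e-6,
)
print(f"compute {compute_s * 1e6:.0f} us, all-reduce {comm_s * 1e6:.0f} us")
print("communication-bound" if comm_s > compute_s else "compute-bound")
```

Plugging in measured bandwidth and kernel times for a real deployment is what turns this from a sketch into a sizing tool.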

The two primary parallelism strategies are tensor parallelism (splitting individual layer weight matrices across devices) and pipeline parallelism (assigning different transformer layers to different devices). Tensor parallelism dominates for latency-sensitive workloads; pipeline parallelism for throughput-bound ones. We needed both.
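To illustrate the difference, here is a minimal NumPy simulation in which list elements stand in for devices. It is a sketch of the two ideas, not our serving code: the function names are hypothetical, and the concatenate and sum steps stand in for the all-gather and all-reduce collectives a real system would issue.

```python
import numpy as np

def column_parallel_matmul(x, w, num_shards):
    """Tensor parallelism, column split: each 'device' holds a slice of w's columns."""
    w_shards = np.split(w, num_shards, axis=1)
    partial_outputs = [x @ shard for shard in w_shards]   # one matmul per device
    return np.concatenate(partial_outputs, axis=1)        # stands in for all-gather

def row_parallel_matmul(x, w, num_shards):
    """Tensor parallelism, row split: partial products must be summed across devices."""
    w_shards = np.split(w, num_shards, axis=0)
    x_shards = np.split(x, num_shards, axis=1)
    partial_sums = [xs @ ws for xs, ws in zip(x_shards, w_shards)]
    return np.sum(partial_sums, axis=0)                   # stands in for all-reduce

def assign_pipeline_stages(num_layers, num_stages):
    """Pipeline parallelism: contiguous blocks of layers per device."""
    return [chunk.tolist() for chunk in np.array_split(np.arange(num_layers), num_stages)]

rng = np.random.default_rng(0)
x = rng.standard_normal((4, 8))
w = rng.standard_normal((8, 12))
print(np.allclose(column_parallel_matmul(x, w, 4), x @ w))
print(np.allclose(row_parallel_matmul(x, w, 4), x @ w))
print(assign_pipeline_stages(num_layers=8, num_stages=4))
```

The row-split variant shows where the per-layer all-reduce comes from, which is the collective the next section is concerned with.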

How does topology-aware NCCL reduce communication overhead?

NCCL (NVIDIA Collective Communications Library) performs the all-reduce operations that synchronize activations across tensor-parallel ranks. By default, NCCL selects communication algorithms based largely on message-size thresholds, and its automatic choices do not necessarily match the physical interconnect topology of a given cluster.

Topology-aware collectives fix this by routing traffic over NVLink within each node first, and only then over InfiniBand across nodes. This single change reduced all-reduce latency by 41% in our 512-GPU configuration.
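The routing order described above corresponds to a hierarchical all-reduce: reduce inside each node, all-reduce one buffer per node across nodes, then broadcast back inside each node. The NumPy simulation below is a sketch of that data flow only. It does not call NCCL, the function name is hypothetical, and it assumes ranks are ordered so that consecutive ranks share a node.

```python
import numpy as np

def hierarchical_all_reduce(buffers, gpus_per_node):
    """Simulate a two-level all-reduce over a list of per-rank buffers.

    ASSUMPTION: buffers are ordered by node, gpus_per_node ranks per node.
    """
    if len(buffers) % gpus_per_node != 0:
        raise ValueError("rank count must be a multiple of gpus_per_node")

    nodes = [buffers[i:i + gpus_per_node]
             for i in range(0, len(buffers), gpus_per_node)]

    # Step 1: intra-node reduce (the NVLink hop in the text).
    node_sums = [np.sum(node, axis=0) for node in nodes]

    # Step 2: inter-node all-reduce over one buffer per node
    # (the InfiniBand hop); only len(nodes) participants cross the network.
    global_sum = np.sum(node_sums, axis=0)

    # Step 3: intra-node broadcast so every rank holds the result.
    return [global_sum.copy() for _ in buffers]

rank_buffers = [np.full(4, float(rank)) for rank in range(8)]
reduced = hierarchical_all_reduce(rank_buffers, gpus_per_node=4)
print(reduced[0])
```

The point of the two-level structure is that the slower inter-node link carries one buffer per node instead of one per GPU.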

What is KV-cache prefill scheduling and why does it matter?

The KV-cache stores key/value tensors for all tokens in the context window. For long contexts, KV-cache memory consumption dominates: a 32K context window with a 70B model requires ~52GB of KV-cache per request in fp16. Without intelligent scheduling, cache thrashing destroys both latency and throughput.
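The per-request size follows from a simple product: two tensors (keys and values) per layer, per KV head, per head dimension, per token, times the bytes per value. The sketch below shows the formula. The layer and head counts are illustrative assumptions, not the configuration behind the ~52GB figure, so the printed sizes will differ from it.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, context_tokens,
                   bytes_per_value=2):
    """KV-cache size for one request; the leading 2 covers keys and values."""
    return (2 * num_layers * num_kv_heads * head_dim
            * context_tokens * bytes_per_value)

GIB = 2 ** 30

# ASSUMPTION: hypothetical architectures, chosen only to show how much
# the number of KV heads changes the result at a 32K context in fp16.
full_attention = kv_cache_bytes(num_layers=80, num_kv_heads=64,
                                head_dim=128, context_tokens=32 * 1024)
grouped_query = kv_cache_bytes(num_layers=80, num_kv_heads=8,
                               head_dim=128, context_tokens=32 * 1024)

print(f"64 KV heads: {full_attention / GIB:.1f} GiB per request")
print(f" 8 KV heads: {grouped_query / GIB:.1f} GiB per request")
```

Because the size scales linearly with context length, a scheduler can estimate it from the prompt token count alone once the architecture constants are fixed.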

Our prefill scheduler does three things. It uses a priority queue to batch requests by estimated KV-cache size. It sequences those requests to maximize cache reuse across requests with shared prefixes (system prompts, RAG context). And it uses a chunked prefill strategy that overlaps prefill computation with decode for in-flight requests.
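A toy version of such a scheduler might look like the following. It is a sketch under stated assumptions, not our production scheduler: the class and field names are hypothetical, `prefix_id` is assumed to be a precomputed key identifying a shared prefix, the KV estimate is a flat bytes-per-token figure, and ordering by prefix first is one simple way to keep shared-prefix requests adjacent.

```python
import heapq
from dataclasses import dataclass
from itertools import count

@dataclass
class Request:
    request_id: str
    prefix_id: str       # ASSUMPTION: key identifying a shared prefix
    prompt_tokens: int

class PrefillScheduler:
    """Toy scheduler: group by shared prefix, pack batches under a KV budget."""

    def __init__(self, kv_budget_bytes, bytes_per_token):
        self.kv_budget_bytes = kv_budget_bytes
        self.bytes_per_token = bytes_per_token
        self._heap = []
        self._arrival = count()  # tie-breaker so Request objects are never compared

    def submit(self, req):
        est = req.prompt_tokens * self.bytes_per_token
        # Sort by prefix first so requests sharing a prefix pop together,
        # then by estimated KV size, then by arrival order.
        heapq.heappush(self._heap, (req.prefix_id, est, next(self._arrival), req))

    def next_batch(self):
        batch, used = [], 0
        while self._heap:
            _, est, _, req = self._heap[0]
            # Always admit at least one request so an oversized one cannot stall.
            if batch and used + est > self.kv_budget_bytes:
                break
            heapq.heappop(self._heap)
            batch.append(req)
            used += est
        return batch

def prefill_chunks(prompt_tokens, chunk_size):
    """Split a prompt into [start, end) token ranges for chunked prefill."""
    return [(start, min(start + chunk_size, prompt_tokens))
            for start in range(0, prompt_tokens, chunk_size)]

def build_iterations(chunks, decoding_request_ids):
    """Each iteration runs one prefill chunk alongside decode for in-flight requests."""
    return [{"prefill": chunk, "decode": list(decoding_request_ids)}
            for chunk in chunks]

sched = PrefillScheduler(kv_budget_bytes=1000, bytes_per_token=1)
sched.submit(Request("a", "sys-A", 400))
sched.submit(Request("b", "sys-B", 300))
sched.submit(Request("c", "sys-A", 500))
first = [r.request_id for r in sched.next_batch()]
second = [r.request_id for r in sched.next_batch()]
print(first, second)
print(build_iterations(prefill_chunks(10, 4), ["d", "e"])[0])
```

In this sketch, requests `a` and `c` share a prefix and fit the budget together, so they are batched ahead of `b` even though `b` arrived earlier. A real scheduler would also need a fairness or aging policy to keep that reordering from starving requests.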

What were the final latency results?

Baseline (naive tensor parallelism, no scheduling): p50 = 180ms, p99 = 2,400ms. After topology-aware collectives, chunked prefill, and KV-cache scheduling: p50 = 48ms, p99 = 310ms. That is a 73% reduction in p50 and an 87% reduction in p99. The p99 improvement was primarily driven by eliminating cache thrashing in tail-latency scenarios.